
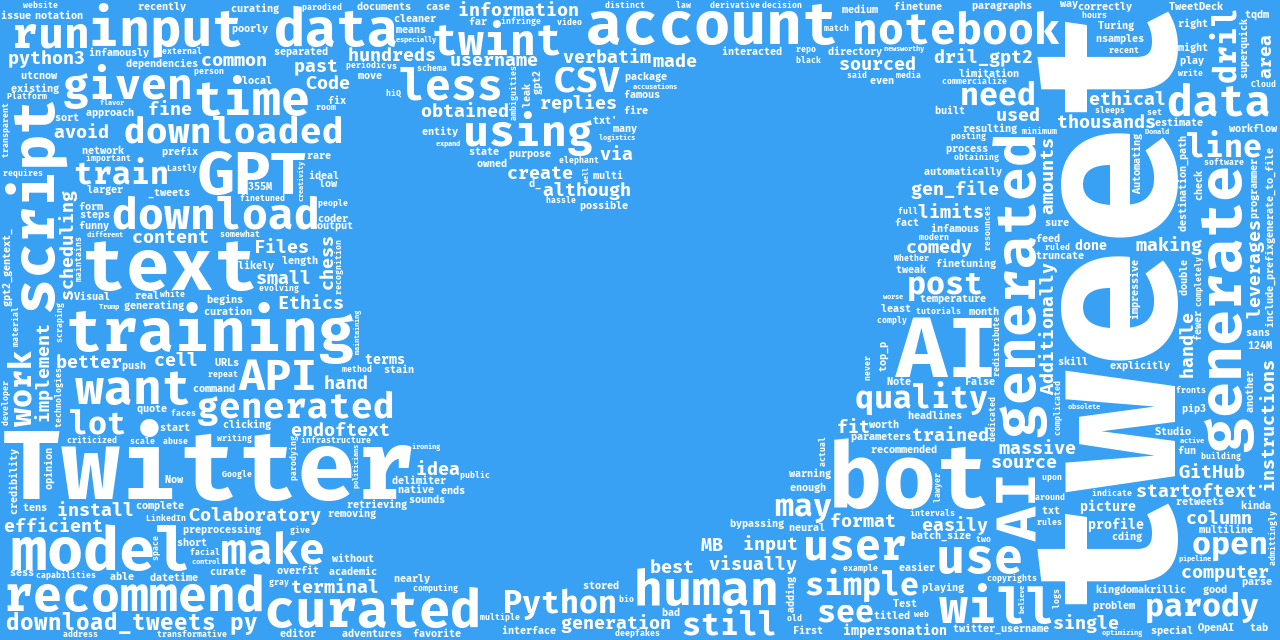

5/7/2023

Gpt2 finetune end of text

This notebook fine-tunes a GPT2 model for text classification on a custom dataset using the Hugging Face transformers library. Hugging Face kindly includes all the functionality needed to use GPT2 in classification tasks. I wasn't able to find much information on how to use GPT2 for classification, so I decided to write this tutorial using a structure similar to that of other transformers models.

Main idea: Since GPT2 is a decoder transformer, the last token of the input sequence is used to make predictions about the next token that should follow the input. This means that the last token of the input sequence contains all the information needed for the prediction. With this in mind, we can use that information to make a prediction in a classification task instead of a generation task. In other words, instead of using the first token embedding to make the prediction as we do in BERT, we use the last token embedding to make the prediction with GPT2.

Since we only cared about the first token in BERT, we padded on the right. In GPT2 we use the last token for prediction, so we need to pad on the left. Thanks to a nice upgrade to Hugging Face Transformers, we can configure the GPT2 tokenizer to do just that.

Since I am using PyTorch to fine-tune our transformers models, any knowledge of PyTorch is very useful. Knowing a little bit about the transformers library helps too.

Like with every project, I built this notebook with reusability in mind. All changes will happen in the data processing part, where you need to customize the PyTorch Dataset, Data Collator and DataLoader to fit your own data needs. All parameters that can be changed are under the Imports section. Each parameter is commented and structured to be as intuitive as possible.

This notebook covers fine-tuning transformers on a custom dataset. I will use the well-known Large Movie Review Dataset, whose movie reviews are labeled positive or negative. The description provided on the Stanford website:

"This is a dataset for binary sentiment classification containing substantially more data than previous benchmark datasets. We provide a set of 25,000 highly polar movie reviews for training, and 25,000 for testing. There is additional unlabeled data for use as well."
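To make the left-padding idea described above concrete, here is a minimal sketch rather than the notebook's actual code. The helper `pad_batch` is a hypothetical, pure-Python illustration of why padding on the left keeps a real token in the last position of every row. The function `build_gpt2_classifier` is also a hypothetical name; it assumes the `transformers` package is installed and that the `gpt2` checkpoint can be downloaded, and it reuses GPT2's end-of-text token as the padding token because GPT2 ships without one.

```python
def pad_batch(sequences, pad_id, side="left"):
    """Pad lists of token ids to the longest length in the batch.

    Returns (input_ids, attention_mask). Hypothetical helper for
    illustration only; a real tokenizer does this for you.
    """
    max_len = max(len(seq) for seq in sequences)
    input_ids, attention_mask = [], []
    for seq in sequences:
        padding = [pad_id] * (max_len - len(seq))
        pad_mask = [0] * len(padding)   # 0 marks padding positions
        real_mask = [1] * len(seq)      # 1 marks real tokens
        if side == "left":
            input_ids.append(padding + list(seq))
            attention_mask.append(pad_mask + real_mask)
        else:
            input_ids.append(list(seq) + padding)
            attention_mask.append(real_mask + pad_mask)
    return input_ids, attention_mask


# Toy batch of token ids (made-up values, not real GPT2 ids).
batch = [[11, 12, 13], [21]]
PAD = 0

left_ids, left_mask = pad_batch(batch, PAD, side="left")
right_ids, right_mask = pad_batch(batch, PAD, side="right")

# With left padding, the last position of every row is a real token,
# which is the position a decoder such as GPT2 predicts from.
last_is_real_left = all(row[-1] == 1 for row in left_mask)
# With right padding, the shorter row ends in padding instead.
last_is_real_right = all(row[-1] == 1 for row in right_mask)


def build_gpt2_classifier(model_name="gpt2", num_labels=2):
    """Sketch of a left-padded GPT2 classification setup.

    Assumes the transformers package is installed; the import is kept
    inside the function so the toy example above runs without it.
    """
    from transformers import GPT2ForSequenceClassification, GPT2Tokenizer

    tokenizer = GPT2Tokenizer.from_pretrained(model_name)
    tokenizer.padding_side = "left"            # pad on the left
    tokenizer.pad_token = tokenizer.eos_token  # reuse <|endoftext|> as pad

    model = GPT2ForSequenceClassification.from_pretrained(
        model_name, num_labels=num_labels
    )
    # Tell the model which id is padding so it can handle batched input.
    model.config.pad_token_id = tokenizer.pad_token_id
    return tokenizer, model
```

Calling `build_gpt2_classifier()` would return a tokenizer and a two-label model suitable for the positive/negative movie reviews; the label count and checkpoint name are assumptions you would adjust for your own data.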
